GitHub - NVIDIA/Milano: Milano is a tool for automating hyper-parameters search for your models on a backend of your choice.

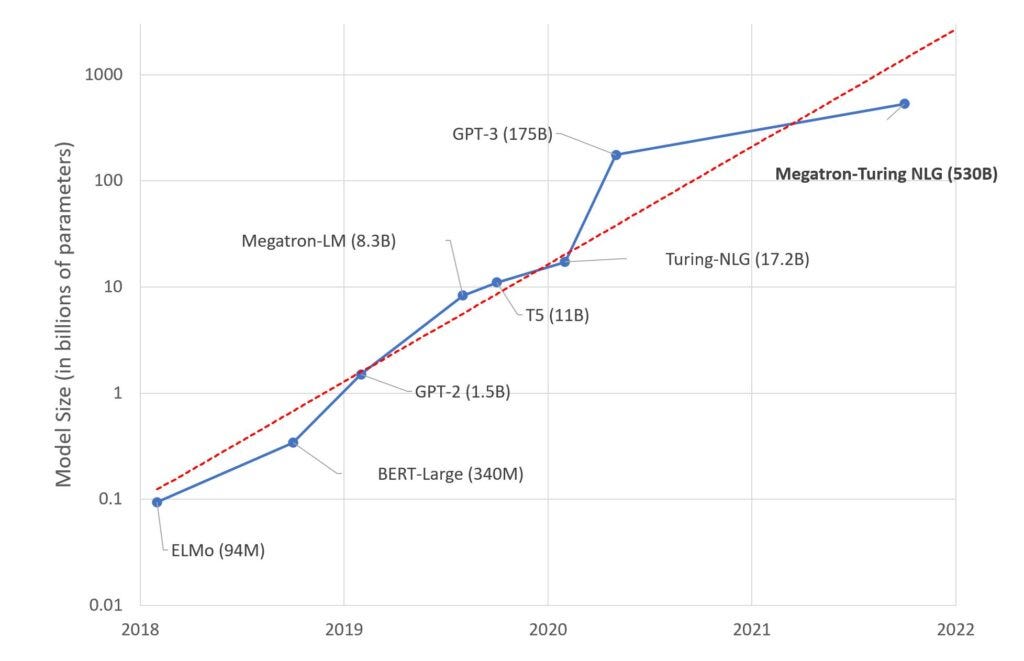

NVIDIA, Microsoft Introduce New Language Model MT-NLG With 530 Billion Parameters, Leaves GPT-3 Behind

NVIDIA, Stanford & Microsoft Propose Efficient Trillion-Parameter Language Model Training on GPU Clusters | by Synced | SyncedReview | Medium

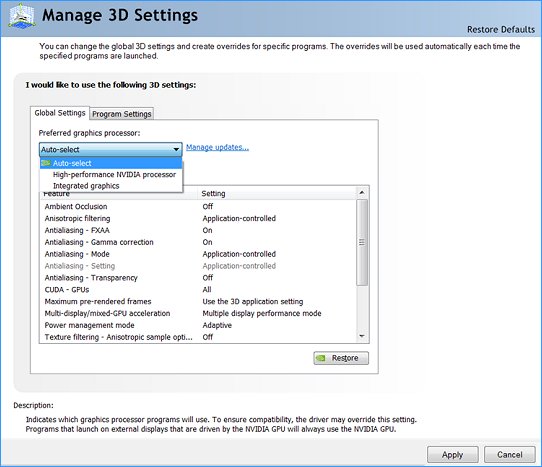

![NVIDIA Control Panel: Manage 3D settings, on the Hardware forum - 29-07-2016 14:37:36 - jeuxvideo.com](https://image.noelshack.com/fichiers/2016/30/1469795735-gerer-les-parametres-3d.jpg)

[NVIDIA Control Panel] Manage 3D settings, on the Hardware forum - 29-07-2016 14:37:36 - jeuxvideo.com

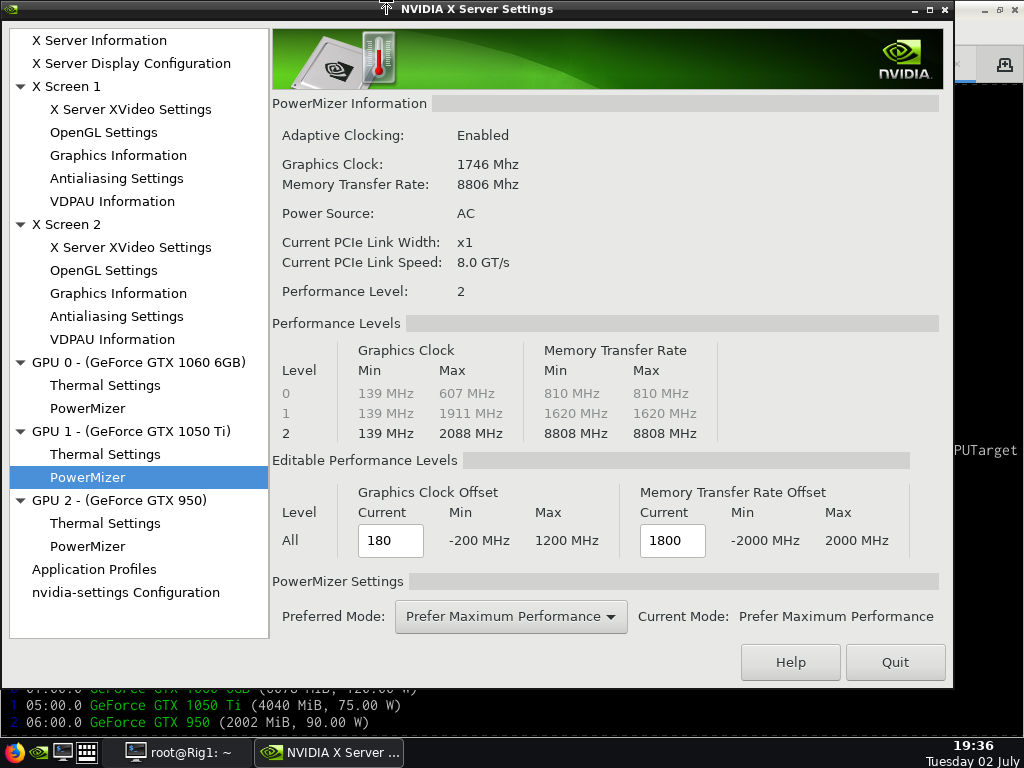

NVIDIA OC parameters does not work - Nvidia Cards - Forum and Knowledge Base A place where you can find answers to your questions | Hive OS

ZeRO-Offload: Training Multi-Billion Parameter Models on a Single GPU

Microsoft and Nvidia create 105-layer, 530 billion parameter language model that needs 280 A100 GPUs, but it's still biased | ZDNet

Deriv on Twitter: "A quick tip for NVIDIA graphics card users: take a look at the NVIDIA control panel (right-click on the desktop) > Adjust the settings

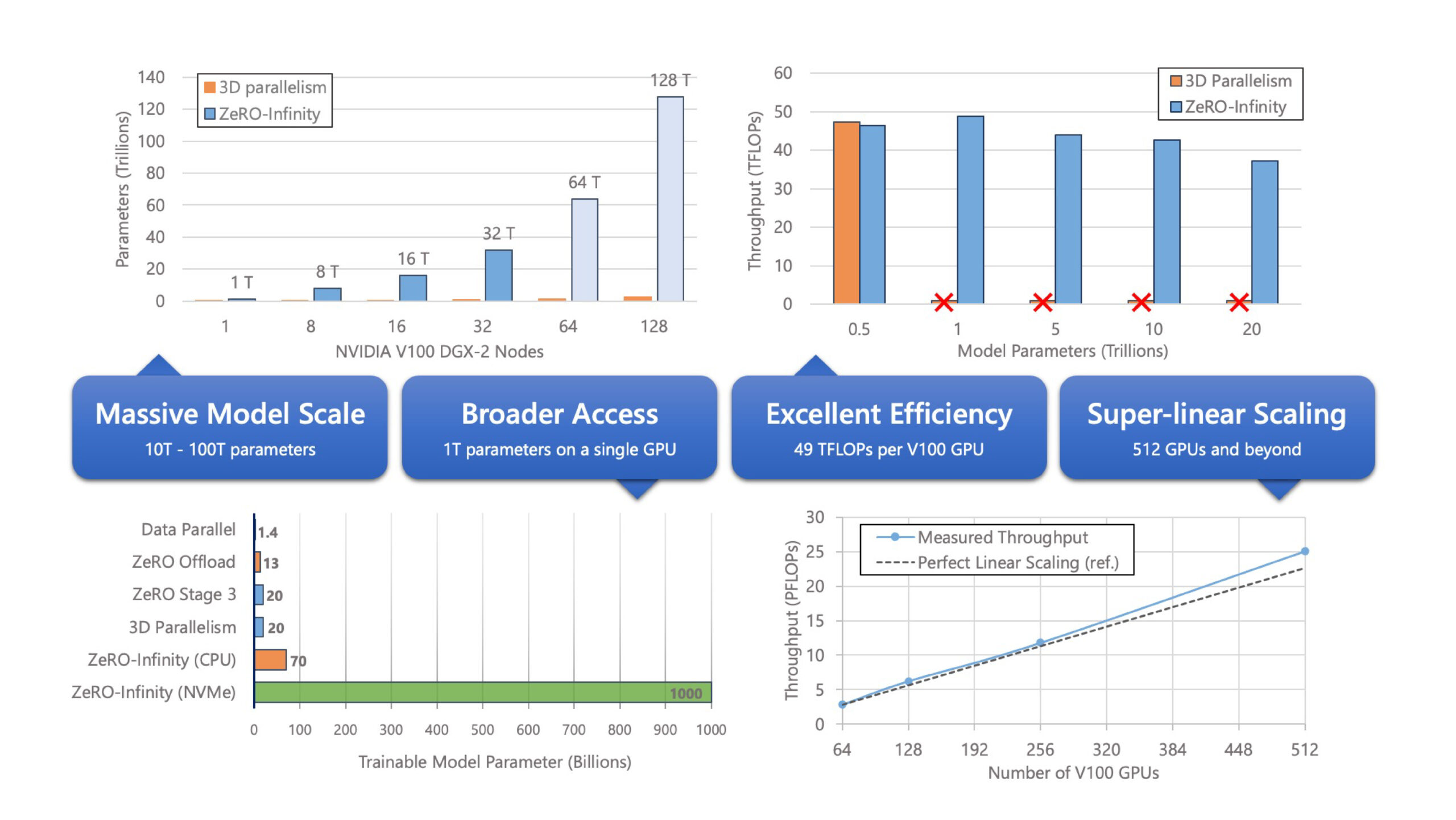

ZeRO-Infinity and DeepSpeed: Unlocking unprecedented model scale for deep learning training - Microsoft Research

![Composer: Configuring an NVIDIA graphics card for Solidworks Composer | A-S3D](https://www.logiciel-cao.com/files/blog/entries/blog-illus/paneaunvidia.png)

![Pilote-Virtuel.com - Flight simulation forum / [X-Plane] FPS and NVidia 3D settings](http://www.easy-upload.net/fichiers/nvidia.2017101205655.jpg)